Eric Fillion is a doctoral candidate in History. His research focuses on the origins of Canada’s cultural diplomacy and, more specifically, on the use of music in Canadian-Brazilian relations (1940s-1960s). This project builds on the experience he has acquired as a musician and on his ongoing study of Quatuor de jazz libre du Québec. He is also the founder of Tenzier, a nonprofit organization, whose mandate is to preserve and disseminate archival recordings by Quebec avant-garde artists.

Encrypt now: Research ethics and digital security

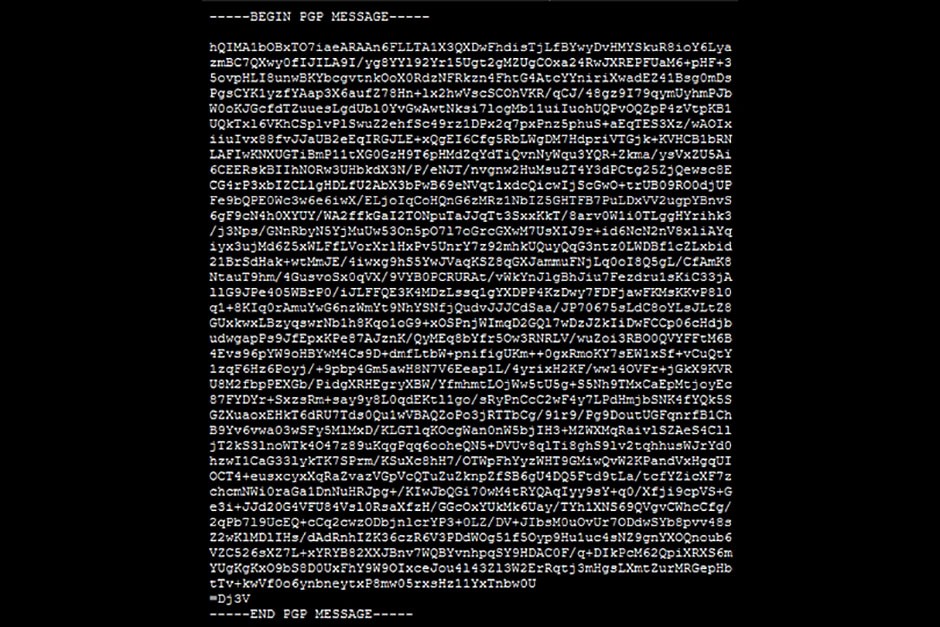

Encryption at work. | Photo: Eric Fillion

WA2ffkGaI2TONpuTaJJqTt3SxxKkt. You are not supposed to be able to decode this and that’s the point. The email from which I extracted these few words was not intended for you.

I started encrypting some of my correspondence a few years ago at the request of a colleague who felt that we should proactively prevent others (including government agencies and police forces) from eavesdropping on our conversations, in the same way that we would if someone wanted to tap our phones or open the letters and packages that we put in the mail. That made sense.

“Let’s do it,” I said.

I figured it was worth investing a few hours in learning how to set up encryption since I would also learn a thing or two about digital security and how it applies to my work as a scholar.

Academia does not excuse “digital sloppiness,” to borrow an expression used by the social anthropologist Jonatan Kurzwelly. Nor does working in a university prevent you from being hacked, surveilled or subpoenaed.

David N. Pellow’s work on radical environmental and animal rights movements comes to mind. In Total Liberation, he tells the story of Scott DeMuth, a graduate student at the University of Minnesota, who was jailed for contempt of court after he refused to testify in front of a federal grand jury. The authorities were trying to find out who was behind an action by the Animal Liberation Front, a group DeMuth had been researching.

The FBI and the Department of Justice subsequently contacted Pellow, who then realized that his communications were probably being monitored and that extra precautions were needed to protect his “research participants and their privacy, as well as the privacy of colleagues, coworkers and student employees.”

Closer to home, historian Steve Hewitt received a visit from RCMP officers while he was putting the finishing touches on Spying 101, a fascinating study of police surveillance at Canadian universities.

Your work might not be about subversive groups or policing, but it might very well deal with topics that threaten to upset the status quo or bring about paradigm shifts in your field or within society. If not, digital security should still be at the top of your priority list if your research involves sensitive data or human subjects to whom you promised anonymity and/or confidentiality.

Assuming the above applies to you, encryption is not just a side project to undertake out of intellectual — or technical — curiosity: it’s an obligation.

Ethics

The issue of digital security cannot easily be explored without first addressing the ethics of surveillance. As citizens, we have become complicit in, and acquiescent to, the rise of the surveillance society. We surrender our personal data daily for the sake of efficiency and productivity through our reliance on increasingly pervasive communications and information technologies.

Most of us do not realize “just how visible we have all become to myriad organizations,” explained the members of the New Transparency Project in 2014.

In their comprehensive report, aptly titled Transparent Lives, they write: “Surveillance is generally a technique of social power and control that relies on the easy visibility of the one being watched and the relative invisibility of the one doing the watching. It is also designed to enhance the influence of the watcher over the person or group being watched. Regardless of whether the exercise of such power is legitimate or benign, it inevitably challenges liberal democratic norms founded on citizen autonomy.”

There is an abundance of works dealing with the ethics of surveillance. The same cannot be said about the interplay between “digital sloppiness” and research ethics.

For those of us doing oral history, today’s digital landscape poses considerable challenges. In our work, we collect life stories or conduct topical interviews to gain insight into the lived experiences of individuals. We do so, in part, to acquire additional perspective on the past and the ways in which subjective narratives intersect with meta-narratives to mediate memories.

The narrators (i.e. the interviewees) are factory-floor activists, genocide survivors, war veterans, refugees, hitchhikers, community organizers, musicians, retired teachers, former diplomats, etc. The list is endless.

Many of our narrators tell their stories on condition of confidentiality and/or anonymity. Some go further by insisting that typescripts as well as audio and video recordings of the interviews be destroyed at some point. Our guarantees to narrators are hollow if we do not encrypt our hard drives or if we fail to use end-to-end encryption when sending files over the Internet.

By not addressing the issue of digital security, we run the risk of undermining the trust upon which our practice is based.

Digital Security

In the same way that Kurzwelly found ethical guidelines to be lacking in his professional association and at his home university in the UK, there is a dire need for adequate training and guidance on this side of the Atlantic.

Canada’s three federal research agencies (CIHR, NSERC and SSHRC) perform a critical role in outlining the ethical principles that should guide all research involving human subjects.

In its most recent policy statement (TCPS 2/2014), the Interagency Advisory Panel on Research Ethics notes that researchers must adopt security measures to “safeguard entrusted information” in order to fulfill their ethical and confidentiality duties. Encryption is listed as a technical safeguard measure that should be used when storing data on hardware.

Regarding cloud storage and the online sharing of files, the TCPS 2/2014 has this warning: “Research data sent over the Internet may require encryption […] to prevent interception by unauthorized individuals, or other risks to data security.” It adds: “In general, identifiable data obtained through research that is kept on a computer and connected to the Internet should be encrypted.”

The TCPS 2/2014 makes it clear that the onus is on researchers to demonstrate to the Research Ethics Board of their university that they can fulfill the commitments made to participants. That said, it also underlines that “[i]nstitutions shall support their researchers in maintaining promises of confidentiality.”

There is a pressing need here for the Office of Research and the School of Graduate Studies’ GradProSkills program to work with departments and affiliated research centres in preparing new students to tackle, head on, the challenges of digital security. New research guidelines need to be developed and rapidly implemented. Communications and archival procedures need to be updated. Workshops and hands-on support need to be provided to scholars.

Such initiatives would benefit researchers in all disciplines, not just historians collecting testimonies.

4yrixH2FK

In the meantime, we can — and should — be proactive as individual scholars.

Search the web to find out more about best practices in digital security and to learn how to fulfill your ethical and confidentiality duties as a researcher. The Electronic Frontier Foundation’s Surveillance Self-Defense guide is a good place to start.

The Centre for Investigative Journalism has published an excellent handbook titled Information Security for Journalists. The Free Software Foundation’s Email Self-Defense guide will walk you through the steps of setting up encryption on your email account.

Whatever tools you use, be sure they are open-source software. Unlike proprietary software, open-source software can be audited by privacy activists who can identify vulnerabilities or expose “backdoors” that allow individuals and organizations to access your data without your knowing it. Open-source software is also generally free, which should serve as an additional incentive if one is needed. Be generous and donate to support the people who develop, maintain and upgrade these tools.

Making the transition towards a more secure digital landscape is not as daunting a task as it may seem, although it does require patience and a willingness to learn, adapt and keep pace with evolving technologies. Why not make this your personal resolution for 2019?

** I wish to thank Dj3V for the lively discussions and encrypted correspondence, which inspired the above text.
