Concordia Professor Chris Salter talks about Milieux Institute’s participation in Ars Electronica

Chris Salter, Professor of Computation Arts in the Department of Design and Computation Arts and Co-Director of the Hexagram Network for Research-Creation in Media Arts, Design, Technology and Digital Culture, will co-deliver a keynote conversation at Ars Electronica this year.

The EMERGENCE/Y garden at the Ars Electronica festival in Linz, Austria, marks the launch of the Hexagram Network’s 2021-2022 edition of Rencontres Interdisciplinaires. Running from September 8 to 12, Ars Electronica is the world’s largest festival focused on art, science, technology and society. The gardens are the virtual complement to the festival’s in-person events.

Members of Concordia’s Milieux Institute for Arts, Culture and Technology are participating in this year’s event. Among them is Computation Arts Professor Chris Salter, the Institute’s associate director, who will deliver a keynote conversation with Angélique Willkie, Associate Professor of Contemporary Dance (who is also co-director of Milieux's LePARC cluster and Chair of the President's Task Force on Anti-Black Racism), and seminal new media artist David Rokeby.

Salter is also presenting an online version of the large-scale multi-sensory immersive work SENSEFACTORY, inspired by Moholy-Nagy’s Sketch for the Mechanical Eccentric, and the general ethos of the Bauhaus movement, followed by the question: What would Bauhaus artists create with the resources of today?

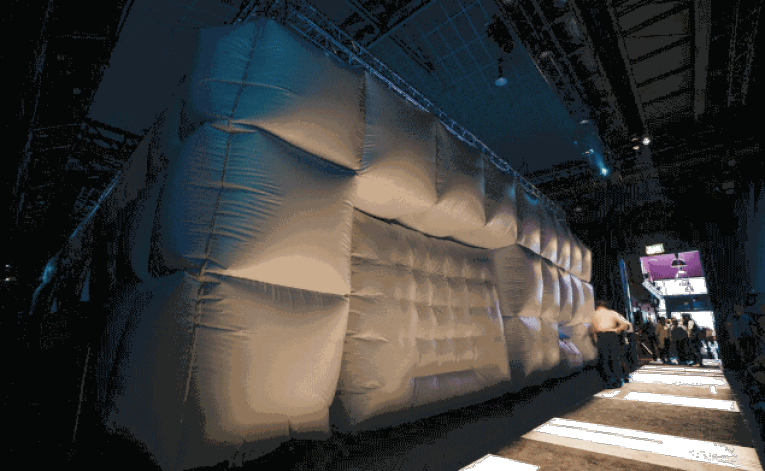

Salter and his team presenting SENSEFACTORY at Ars Electronica at the EMERGENCE/Y Garden hosted by the Hexagram Network. Photo by Sebastian Lehner

You’re part of the team behind the collaborative project SENSEFACTORY. What can you tell us about that project?

Chris Salter: SENSEFACTORY came out of an interest around Moholy-Nagy’s [Score Sketch for the] Mechanical Eccentric, which was a 1923 graphic score for a theatre of machines that would stimulate all of the human senses but was never realized. We thought, this is an interesting period in history, and how we think about a theatre of machines, wherein the human body is not the centre of the world anymore (or so we might like to think). These avant-garde European thinkers in the 1920s, of which the Bauhaus is a prime example, imagined the new socialist world that was emerging. It was a socialist world that came deeply out of not replacing the human, but emphasizing the capabilities of the human by putting those machines there.

We created a kind of social play space where you became aware that something is happening that is greater than oneself. A kind of social dynamic emerged; people realized that their senses were not individual but somehow exercised collectively. It became something in the Bauhaus tradition with a level of creative expression that came not only from the creators but from the participants.

Scent, in particular, is so sensitive: it is heavily triggering, but it is also notoriously difficult to narrate or direct. Today, for many people, getting COVID-19 has meant losing their sense of smell, and consequently their sense of taste.

CS: There’s a whole chapter in my upcoming MIT Press book Sensing Machines about e-noses and e-tongues, and about the simulation of taste and smell that’s happening. What’s going on with research is completely insane! It entails questions like, “Can you have a tongue without a brain?”

Scientists and engineers are essentially creating fragmented, imperfect brains out of software – for instance, these artificial noses and tongues interpret and classify scents and tastes based on mathematical models – artificial neural networks. Biological taste buds are incredibly sophisticated because they don’t have a kind of selectivity - it’s the brain that breaks down what sour, bitter, sweet and salty is, not the taste buds. The taste buds are the most sensitive tactile elements of the body, and they are constantly growing and dying, being replaced all the time.

The theme for the Hexagram Network’s Ars Electronica Garden is EMERGENCE/Y, and the topic for your collaborative keynote discussion, with David Rokeby and Angélique Willkie, is machine-body interaction. How do notions of EMERGENCE/Y afford an understanding of the current state of machine-body interaction?

CS: I just wrote an essay on the generative pre-trained transformer models (the so-called GPT-3 models) released by OpenAI, which have gotten a lot of hype because people are like, “Wow, machines can now generate not only sentences, but full paragraphs that sound like humans wrote them!” They sound like human language, but no human has produced this sequence of words.

I’m convinced that the whole way we live in the world is based not only on what our brains do, but on what our whole holistic system does. What’s interesting is that we develop machines that we increasingly call intelligent, which is a very problematic word because psychologists, cognitive scientists, neuroscientists and linguists don’t really know what the definition of human intelligence is.

So we don’t really know what human intelligence is but we want to make an intelligent machine, which is a little bit of a problem. If you look back at the early histories of artificial intelligence, however, they have a really specific understanding of what intelligence is: It is the idea of logical thought-processing, that humans make sense of the world through logical propositions, and we can model those logical propositions in the workings of the brain. We can then build machines that operate in those same logical structures, and therefore “simulate” intelligence. The problem with all that is that human beings are finite, and machines are not—theoretically.

Find out more about the Hexagram Network’s EMERGENCE/Y garden at Ars Electronica.

Learn more about the Milieux Institute at Ars Electronica.